Section 3.2: Measures of Dispersion

Objectives

By the end of this lesson, you will be able to...

- compute the range of a variable from raw data

- compute the variance of a variable from raw data

- compute the standard deviation of a variable from raw data

- use the Empirical Rule to describe data that are bell-shaped


Consider the following two sets of exam scores:

[Figure: histograms of the two sets of exam scores]

Often, we want to compare two data sets and look for differences. In this case, however, the sample mean for the first set is 78.3, with a median of 78, while the second set has a sample mean of 78.7, also with a median of 78.

We can see that the measures of center are not enough to distinguish between the two sets, so we'll need to somehow compare their "dispersion", or spread. The first statistic we'll learn about to help do that is called the range.

Range

The range, R, of a variable is the difference between the largest and smallest data values.

Example 1

Looking at our data from above, we see that the range for the first set of exam scores is 99-48 = 51, while the range for the second set is 91-58 = 33. As we can see from the histograms, the second set of exam scores has less dispersion.
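Because the range only needs the largest and smallest values, it is a one-line computation. The sketch below uses a hypothetical helper name, `data_range`. The exam scores themselves aren't reproduced in this lesson, so the example uses the heights of the 2008 US Men's Olympic Basketball team from Example 2, converted to inches.

```python
def data_range(values):
    """Return the range R: the largest data value minus the smallest."""
    if not values:
        raise ValueError("the range is undefined for an empty data set")
    return max(values) - min(values)


# Heights (inches) of the 2008 US Men's Olympic Basketball team, from Example 2
heights = [80, 81, 82, 78, 83, 80, 76, 72, 81, 78, 76, 75]
print(data_range(heights))  # 11 (83 - 72)
```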

Unfortunately, the range isn't always enough to distinguish between two sets of data, as the next example shows.

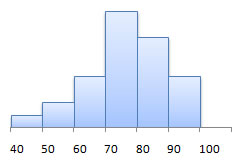

Let's look at another two sets of exam scores.

[Figure: histograms of the second pair of exam-score sets]

In this case, we can see that the range is R = 95-48 = 47 for both sets, but they clearly don't have the same dispersion. The second set is much more condensed, with the bulk of the scores in the C range (70-79).
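To see how the range ignores everything except the two extremes, here is a small sketch with two hypothetical score lists (invented for illustration, not the data in the histograms). Both have a range of 47, yet one has most of its scores packed into 70-79.

```python
# Hypothetical scores invented for illustration; they are NOT the data in the histograms.
spread_out = [48, 55, 62, 70, 78, 85, 90, 95]
condensed = [48, 71, 73, 74, 75, 76, 78, 95]

for name, scores in [("spread out", spread_out), ("condensed", condensed)]:
    r = max(scores) - min(scores)                    # identical for both sets
    in_c_range = sum(70 <= s <= 79 for s in scores)  # how many scores fall in 70-79
    print(f"{name}: R = {r}, scores in 70-79: {in_c_range} of {len(scores)}")
```

Only the smallest and largest scores feed into R, so changing the middle of either list leaves the range untouched.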

Variance

To describe the dispersion in cases like these, we'll need to somehow describe how far a "typical" observation is from the mean, rather than looking at the extreme values.

An obvious choice would be to just look at the average difference from the mean. The figure below shows the heights of the 2008 US Men's Olympic Basketball team and each player's corresponding difference from the mean. (Source: USA Basketball)

If we try to take the average difference from the mean, we have a problem - we get 0!

$$\frac{1.5 + 2.5 + 3.5 - 0.5 + 4.5 + 1.5 - 2.5 - 6.5 + 2.5 - 0.5 - 2.5 - 3.5}{12} = \frac{0}{12} = 0$$

Why is this? Well, it's because the mean acts as a balancing point, as we talked about earlier. In fact, this always happens: since the mean is the sum of the data divided by n, the differences always add up to Σxᵢ − n·x̄ = 0, so their average is zero as well.
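A quick way to convince yourself is to compute the average difference from the mean for the team heights (converted to inches from the table in Example 2). The sketch uses exact fractions so that floating-point rounding can't hide or fake the zero.

```python
from fractions import Fraction

heights = [80, 81, 82, 78, 83, 80, 76, 72, 81, 78, 76, 75]  # inches
mean = Fraction(sum(heights), len(heights))     # 942/12 = 78.5
deviations = [x - mean for x in heights]        # 1.5, 2.5, 3.5, -0.5, ...
average_deviation = sum(deviations) / len(deviations)
print(float(mean), average_deviation)           # 78.5 0
```

The positive and negative deviations cancel exactly, which is the balancing-point property of the mean.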

So what do we do? The obvious choice would be to take the average distance from the mean. That is, take the absolute value of each of the differences. It's a good thought, but anyone who's taken calculus will tell you that absolute values can be pretty difficult to work with, so instead, we square them.

This creates a new measure of dispersion, called the variance.

The population variance, σ², of a variable is the sum of the squared deviations about the population mean, divided by N.

$$\sigma^2 = \frac{(x_1 - \mu)^2 + (x_2 - \mu)^2 + \cdots + (x_N - \mu)^2}{N} = \frac{\sum (x_i - \mu)^2}{N}$$

This is pretty complex, but we'll have technology to do most of the work for us.
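The formula translates almost word for word into code. The sketch below is a minimal, hypothetical `population_variance` helper; purely for illustration, it treats the 12 team heights (in inches) as if they were an entire population.

```python
def population_variance(values):
    """sigma^2: sum of squared deviations about the mean mu, divided by N."""
    N = len(values)
    mu = sum(values) / N
    return sum((x - mu) ** 2 for x in values) / N


# Treating the 12 team heights (inches) as if they were an entire population
heights = [80, 81, 82, 78, 83, 80, 76, 72, 81, 78, 76, 75]
print(population_variance(heights))  # 9.75 (= 117 / 12)
```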

Like the mean, the variance also has a sample version, but unlike the mean, the sample formula is actually different from the population formula.

The sample variance, s², is computed by determining the sum of the squared deviations about the sample mean and dividing the result by n-1.

$$s^2 = \frac{(x_1 - \bar{x})^2 + (x_2 - \bar{x})^2 + \cdots + (x_n - \bar{x})^2}{n - 1} = \frac{\sum (x_i - \bar{x})^2}{n - 1}$$

The first thing most students ask when they see this (I did, too) is "Why n-1 instead of just n?" It's a good question, and a difficult one to answer in plain English. The key is to look at the purpose of using the sample variance (or any sample statistic, for that matter). That purpose is to get an estimate of the true population variance.

Unless we have data for the entire population, our estimate will likely be incorrect. If we look at the average of all possible sample variances, though, that average should be the same as the population variance we're trying to estimate. In other words, we'll be wrong most of the time, but the average of all of our attempts will be correct.

The thing is, if we divide by n in the sample variance formula above, our estimate will, on average, be too low. (We can actually prove this mathematically, but it's pretty heady stuff. It's usually not covered until a graduate course in probability and statistics.) We call an estimate like this biased, since it consistently underestimates the parameter it's trying to estimate.

Interestingly enough, dividing by n-1 makes the estimate unbiased. (This can also be proven mathematically.) So it may seem like an odd thing to do, but there's very solid mathematical reasoning behind it.
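The unbiasedness claim can be checked exactly on a toy example rather than taken on faith. The sketch below assumes a hypothetical three-value population {1, 2, 3} and samples of size n = 2 drawn with replacement (so the draws are independent, which the unbiasedness result relies on). It averages both versions of the estimate over every possible sample, using exact fractions.

```python
from fractions import Fraction
from itertools import product

population = [1, 2, 3]  # hypothetical tiny population
N = len(population)
mu = Fraction(sum(population), N)
sigma2 = sum((x - mu) ** 2 for x in population) / N  # true population variance


def sum_sq_dev(sample):
    """Sum of squared deviations about the sample mean x-bar."""
    xbar = Fraction(sum(sample), len(sample))
    return sum((x - xbar) ** 2 for x in sample)


n = 2
samples = list(product(population, repeat=n))  # all 9 ordered samples, with replacement
avg_divide_by_n = sum(sum_sq_dev(s) / n for s in samples) / len(samples)
avg_divide_by_n_minus_1 = sum(sum_sq_dev(s) / (n - 1) for s in samples) / len(samples)

print(sigma2, avg_divide_by_n, avg_divide_by_n_minus_1)  # 2/3 1/3 2/3
```

Dividing by n gives an average estimate of 1/3, well below the true value of 2/3, while dividing by n-1 lands on 2/3 exactly. Individual samples still miss (some give 0), but the long-run average is correct.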

Example 2

Let's refer back to the heights of the players on the US Men's Olympic basketball team, and let's treat this as a sample of all the basketball players in the US.

Converting each height to inches and averaging gives x̄ = 942/12 = 78.5 inches.

| Player | Height | xᵢ − x̄ (in.) | (xᵢ − x̄)² |
|---|---|---|---|
| Carmelo Anthony | 6'8" | 1.5 | 2.25 |
| Carlos Boozer | 6'9" | 2.5 | 6.25 |
| Chris Bosh | 6'10" | 3.5 | 12.25 |
| Kobe Bryant | 6'6" | -0.5 | 0.25 |
| Dwight Howard | 6'11" | 4.5 | 20.25 |
| LeBron James | 6'8" | 1.5 | 2.25 |
| Jason Kidd | 6'4" | -2.5 | 6.25 |
| Chris Paul | 6'0" | -6.5 | 42.25 |
| Tayshaun Prince | 6'9" | 2.5 | 6.25 |
| Michael Redd | 6'6" | -0.5 | 0.25 |
| Dwyane Wade | 6'4" | -2.5 | 6.25 |
| Deron Williams | 6'3" | -3.5 | 12.25 |
| Sum | | | 117 |

So the sample variance is then:

$$s^2 = \frac{\sum (x_i - \bar{x})^2}{n - 1} = \frac{117}{12 - 1} \approx 10.6$$
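Here is the same calculation as a minimal sketch, using a hypothetical `sample_variance` helper that divides by n - 1. The heights are the table values converted to inches.

```python
def sample_variance(values):
    """s^2: sum of squared deviations about x-bar, divided by n - 1."""
    n = len(values)
    if n < 2:
        raise ValueError("the sample variance needs at least two observations")
    xbar = sum(values) / n
    return sum((x - xbar) ** 2 for x in values) / (n - 1)


heights = [80, 81, 82, 78, 83, 80, 76, 72, 81, 78, 76, 75]  # inches
s2 = sample_variance(heights)  # 117 / 11
print(round(s2, 1))            # 10.6
```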

You may notice that I rounded the variance to the tenths place.

When necessary, round the variance to one more digit than the original data. For example, if the data are whole numbers, round the variance to the tenths place (as in the previous example); if the data are already recorded to the tenths place, round to the hundredths place.

Technology

Here's a quick overview of the formulas for finding variance in StatCrunch.

Note: If your data are a population, be sure to select "Unadj. variance" or "Unadj. std. dev." to calculate the population variance or population standard deviation, respectively.
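If you happen to use Python instead of StatCrunch, the standard library's `statistics` module makes the same distinction. `statistics.variance` divides by n - 1 (the sample version), while `statistics.pvariance` divides by N (the population version, which appears to play the role of StatCrunch's "Unadj. variance"). The sketch below applies both to the team heights in inches.

```python
import statistics

heights = [80, 81, 82, 78, 83, 80, 76, 72, 81, 78, 76, 75]  # inches

sample_var = statistics.variance(heights)       # divides by n - 1
population_var = statistics.pvariance(heights)  # divides by N ("unadjusted")

print(round(sample_var, 1), round(population_var, 2))  # 10.6 9.75
```

The matching standard deviation functions are `statistics.stdev` and `statistics.pstdev`.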


One major problem with the variance is that the units don't really make sense. Take the previous example about the heights of the players on the 2008 US Men's Olympic Basketball team. If we look at the units for that variance, it's 10.64 inches squared. What does that have to do with the dispersion of the data? The data are in inches, not inches squared!

Standard Deviation

To remedy that, we need another measure of dispersion, called the standard deviation.

The population standard deviation, σ, is obtained by taking the square root of the population variance.

$$\sigma = \sqrt{\sigma^2}$$

The sample standard deviation, s, is obtained by taking the square root of the sample variance.

$$s = \sqrt{s^2}$$

So, referring again to our previous example, the sample standard deviation is

$$s = \sqrt{s^2} = \sqrt{\frac{117}{11}} \approx 3.3 \text{ inches.}$$

So we could then say that the "typical" player is about 3.3 inches different from the average height of the team.

Now that makes more sense!
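In code, the standard deviation is just the square root of the variance. This minimal sketch takes the square root of Python's `statistics.variance` for the team heights (in inches) and checks it against `statistics.stdev`, which computes the sample standard deviation directly.

```python
import math
import statistics

heights = [80, 81, 82, 78, 83, 80, 76, 72, 81, 78, 76, 75]  # inches

s = math.sqrt(statistics.variance(heights))  # square root of the sample variance
print(round(s, 1))                           # 3.3 (inches, the same units as the data)
print(math.isclose(s, statistics.stdev(heights)))  # True
```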

When necessary, round the standard deviation to one more digit than the original data. For example, if the data are whole numbers, round the standard deviation to the tenths place (as in the previous example); if the data are already recorded to the tenths place, round to the hundredths place.


What Does It Mean?

So what can we tell from the standard deviation? Let's go back to those two sets of exam scores. Which one do you think has a higher standard deviation?

[Figure: histograms of the two sets of exam scores]

The first data set has a higher standard deviation.

It has a higher standard deviation because it is more spread out. That's the key:

The larger the standard deviation, the more dispersion the distribution has.
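A small sketch makes the point concrete. The two data sets below are hypothetical, chosen to share the same mean (80) while differing in spread; the more spread-out set has the larger standard deviation.

```python
import statistics

# Hypothetical data sets with the same mean (80) but different spread
spread_out = [60, 70, 80, 90, 100]
condensed = [76, 78, 80, 82, 84]

for name, data in [("spread out", spread_out), ("condensed", condensed)]:
    print(name, statistics.mean(data), round(statistics.stdev(data), 2))
```

The spread-out set's standard deviation is √250 ≈ 15.81, roughly five times the condensed set's √10 ≈ 3.16, even though the means match.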

Technology

Here's a quick overview of the formulas for finding standard deviation in StatCrunch.


One nice benefit of understanding the relationship between the standard deviation and the shape of the distribution is that it helps us get a sense of how much of the data should be within a certain number of standard deviations.

In particular, if the distribution is bell-shaped, we can be fairly precise about what percentage of the data should lie within 1, 2, or 3 standard deviations.

The Empirical Rule

If a distribution is roughly bell-shaped, then

- Approximately 68% of the data will lie within 1 standard deviation of the mean.

- Approximately 95% of the data will lie within 2 standard deviations of the mean.

- Approximately 99.7% of the data will lie within 3 standard deviations of the mean.
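As a preview of where these percentages come from, here is a sketch that assumes a perfectly normal (bell-shaped) distribution and uses Python's `statistics.NormalDist` (available in Python 3.8 and later). It computes the share of the distribution within k standard deviations of the mean; the Empirical Rule's figures are these values rounded.

```python
from statistics import NormalDist

standard_normal = NormalDist(mu=0, sigma=1)  # idealized, perfectly bell-shaped distribution

for k in (1, 2, 3):
    share = standard_normal.cdf(k) - standard_normal.cdf(-k)  # fraction within k SDs
    print(f"within {k} SD: {share:.1%}")
```

This prints about 68.3%, 95.4%, and 99.7%. Real data are only roughly bell-shaped, which is why the rule says "approximately."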

How do we know these percentages so accurately? Well, unfortunately we can't explain it until we get to Chapter 7, but because it fits so well in here with standard deviations, you'll just have to accept it on faith at this point!

It does give us interesting information, though. Let's look at an example to illustrate.

Example 3

IQ tests are generally designed to have a mean of 100 and a standard deviation of 15. It's also known that the distribution of IQ scores tends to follow a bell curve.

With that in mind, approximately what percentage of individuals have IQs between 85 and 115?

About 68%. The mean is 100 and the standard deviation is 15, so 85 is one standard deviation below the mean (100-15=85) and 115 is one standard deviation above the mean (100+15=115).
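The arithmetic generalizes to 2 and 3 standard deviations as well. The sketch below uses a hypothetical helper, `empirical_rule_intervals`, that lists the interval mean ± k·SD for k = 1, 2, 3, paired with the Empirical Rule percentages.

```python
def empirical_rule_intervals(mean, sd):
    """Hypothetical helper: map k -> (mean - k*sd, mean + k*sd) for k = 1, 2, 3."""
    return {k: (mean - k * sd, mean + k * sd) for k in (1, 2, 3)}


approx_share = {1: "68%", 2: "95%", 3: "99.7%"}  # the Empirical Rule percentages
for k, (low, high) in empirical_rule_intervals(100, 15).items():
    print(f"about {approx_share[k]} of IQs lie between {low} and {high}")
```

For IQ scores this gives 85 to 115, 70 to 130, and 55 to 145.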

It's difficult to characterize a "genius" explicitly, but some put it at an IQ of about 145+. About what percent of the population are "geniuses" by this criterion?

145 is 3 standard deviations above the mean (100 + 3 · 15 = 145). We also know that about 99.7% of all individuals have IQ scores within 3 standard deviations of the mean. That leaves 0.3% farther than 3 standard deviations away, and since a bell-shaped distribution is symmetric, about half of that, or 0.15%, have IQs higher than 145.
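The sketch below reproduces this reasoning and, as a cross-check, compares it with the tail area of an exactly normal distribution via Python's `statistics.NormalDist`. The assumption that IQ scores are exactly normal is ours, made only for the comparison.

```python
from statistics import NormalDist

mean, sd, cutoff = 100, 15, 145
k = (cutoff - mean) / sd               # 3.0 standard deviations above the mean
rule_estimate = (100 - 99.7) / 2       # percent beyond 3 SDs on one side, by symmetry
iq = NormalDist(mu=mean, sigma=sd)     # assumes IQs are exactly normal
normal_estimate = (1 - iq.cdf(cutoff)) * 100  # percent above 145

print(k, round(rule_estimate, 2), round(normal_estimate, 2))  # 3.0 0.15 0.13
```

The exact normal tail (about 0.13%) is close to the Empirical Rule's 0.15%; the small gap comes from the rule rounding 99.73% to 99.7%.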